MPEG stands for “Moving Picture Experts Group”. The standards are defined by the Moving Picture Experts Group itself, which produces its specifications under ISO (International Organization for Standardization) and IEC (International Electrotechnical Commission). MPEG video compression is used in many current devices that can play video, including a wide range of products such as DVD players, HDTV (high-definition television) sets and video conferencing devices.

MPEG applications benefit from needing less storage space and less bandwidth to transmit video from one point to another. Basically, MPEG is an encoding and compression system for digital video: it reduces the amount of data needed to represent a particular video.

There are various MPEG standards which are discussed below:

MPEG-1 was finalized in 1991, and was originally optimized to work at resolutions of approximately 352×240 pixels at 30 frames/sec (NTSC based) or 352×288 pixels at 25 frames/sec (PAL based). It is often thought that the MPEG-1 resolution is limited to the above sizes, but it in fact may go as high as 4095×4095 at 60 frames/sec. The bit-rate is optimized around 1.5 Mb/sec.

MPEG-2 was finalized in 1994, and handled problems related to digital television broadcasting. Also, the bit-rate was raised to between 4 and 9 Mb/sec, resulting in very high quality video. MPEG-2 consists of profiles and levels. The profile defines the bit stream scalability and the color space resolution, while the level defines the image resolution and the maximum bit-rate per profile.

Probably the most common descriptor in use currently is Main Profile, Main Level (MP@ML), which refers to 720×480 resolution video at 30 frames/sec, at bit-rates up to 15 Mb/sec for NTSC video. Another example is the HDTV resolution of 1920×1080 pixels at 30 frames/sec, at a bit-rate of up to 80 Mb/sec. This is an example of the Main Profile at High Level (MP@HL).

MPEG-4 is an ISO/IEC standard developed by MPEG (Moving Picture Experts Group), the committee that also developed the previous standards known as MPEG-1 and MPEG-2. These standards made interactive video on CD-ROM and Digital Television possible. MPEG-4, whose formal ISO/IEC designation is ISO/IEC 14496, was finalized in October 1998 and became an International Standard in the first months of 1999. Some work on extensions in specific domains is still in progress.

MPEG uses a series of frames named “I” (intra frames), “P” (predicted frames) and “B” (bidirectional frames). These frames are clustered together to form a “GOP” (Group of Pictures).

An “I” frame is made up of one video frame and stands all by itself. Intra frames are coded using only information present in the picture itself, so they can be reconstructed without any reference to other frames.

The P-frames are forward predicted from the last I-frame or P-frame, so it is impossible to reconstruct them without the data of another frame (I or P). They are coded with respect to the nearest previous I- or P-picture. This technique is called forward prediction.

B-frames are predicted from “I” or “P” frames. A B-frame can carry not only the difference from the previous frame but also the difference from the next frame, hence the name “bidirectional”. B-frames are both forward predicted and backward predicted from the last and next I-frame or P-frame. This frame type provides the most compression, since it uses both a past and a future picture as references; however, its computation time is the largest.

A GOP (Group of Pictures) sequence begins with an I-frame as an anchor, and all frames from that I-frame up to the next I-frame form one GOP. There are three different frame types in a GOP:

“I” or intra-frames

“P” or predictive frames

“B” or bi-directional frames.
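The dependencies between the three frame types can be sketched in a few lines of Python. This is only an illustration: the function name `gop_references` and the pattern `IBBPBBP` are assumptions made for the example, frames are listed in display order, and a real encoder also reorders frames for transmission, which is not modelled here.

```python
def gop_references(pattern):
    """For each frame of a GOP (given in display order), list the indices
    of the I- or P-frames it is predicted from."""
    if not pattern or pattern[0] != "I":
        raise ValueError("a GOP must start with an I-frame")
    # I- and P-frames are the only frames other frames may refer to.
    anchors = [i for i, t in enumerate(pattern) if t in "IP"]
    refs = []
    for i, frame_type in enumerate(pattern):
        prev_anchor = max((a for a in anchors if a < i), default=None)
        next_anchor = min((a for a in anchors if a > i), default=None)
        if frame_type == "I":
            refs.append([])                      # stands by itself
        elif frame_type == "P":
            refs.append([prev_anchor])           # forward prediction only
        elif frame_type == "B":
            # Uses the previous anchor and, if one exists, the next one.
            refs.append([a for a in (prev_anchor, next_anchor) if a is not None])
        else:
            raise ValueError(f"unknown frame type: {frame_type!r}")
    return refs


print(gop_references("IBBPBBP"))
```

Here the I-frame has an empty reference list, each P-frame points back to one earlier frame, and each B-frame points to one earlier and one later frame.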

The basic idea of the MPEG algorithm is that the “next frame” in any video (25 frames per second in the PAL system) will be very similar to the previous frame, so it is possible to predict the remaining frames from it and still arrive at the complete picture sequence.

ALGORITHM OF MPEG

There are five basic steps involved in the MPEG compression algorithm. They are as follows:

1. Colour space Conversion

2. Zig Zag scan and RLE coding

3. Predictive Coding

4. Variable Length Coding

5. Motion Estimation.

In general, each pixel in a picture consists of three components: red, green and blue. This is known as the RGB colour space. In MPEG, RGB is converted to the Y, Cb, Cr space, where Y is the luminance (brightness) component and Cb and Cr are the chrominance (colour) components. More importance is given to the Y component than to the colour components because the human eye notices differences in luminance more readily than differences in colour.

RGB is converted to Y,Cb,Cr by the following formulae –

Y = 0.299R + 0.587G + 0.114B

Cb = 0.564(B – Y)

Cr = 0.713(R – Y)
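As a rough sketch, the conversion can be written as a small Python function. The luma weights 0.299, 0.587 and 0.114 and the chroma scale factors 0.564 and 0.713 are the commonly quoted ITU-R BT.601 values and are an assumption here. The function name `rgb_to_ycbcr` is made up for the example, and no offset or clamping to a storage range is applied.

```python
def rgb_to_ycbcr(r, g, b):
    """Convert one RGB pixel to (Y, Cb, Cr).

    Assumes BT.601-style coefficients. Cb and Cr are centred on zero;
    no offset or clamping for storage is applied in this sketch.
    """
    y = 0.299 * r + 0.587 * g + 0.114 * b   # luminance (brightness)
    cb = 0.564 * (b - y)                    # blue colour difference
    cr = 0.713 * (r - y)                    # red colour difference
    return y, cb, cr


# A grey pixel has equal R, G and B, so both colour differences vanish.
print(rgb_to_ycbcr(128, 128, 128))
```

Because the three luma weights add up to 1, any grey pixel keeps its brightness in Y and has (almost exactly) zero Cb and Cr.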

After converting the frame into the Y, Cb, Cr space, MPEG breaks each frame into 8×8 pixel blocks and performs a mathematical calculation called the DCT (Discrete Cosine Transform) on each block in the frame. The DCT packs the information that is most visible to the human eye into a much tighter spot, concentrating it in a few values.

For example, if the data pattern looked like a long string of 1’s and 0’s, after the DCT most of the 1’s are grouped together. The few 1’s that remain scattered do not represent important information, and long strings of identical values can be compressed more efficiently than short ones.
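To make the DCT step more concrete, here is a minimal and deliberately slow sketch of a two-dimensional DCT on one 8×8 block, written in plain Python. It assumes the orthonormal DCT-II scaling; the name `dct_2d` is chosen for the example, and real encoders use fast, optimized transforms instead.

```python
import math

N = 8  # MPEG works on 8x8 pixel blocks


def dct_2d(block):
    """Orthonormal 2-D DCT-II of an N x N block (list of lists)."""
    def scale(k):
        return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):          # vertical frequency
        for v in range(N):      # horizontal frequency
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (block[x][y]
                              * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                              * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = scale(u) * scale(v) * total
    return out


# A perfectly flat block: all of its information ends up in one coefficient.
flat = [[100] * N for _ in range(N)]
coeffs = dct_2d(flat)
print(round(coeffs[0][0], 6))   # the top-left (DC) coefficient
```

For this flat block the top-left coefficient is about 800 and every other coefficient is zero up to rounding error, which is the "tighter spot" effect described above in its most extreme form.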

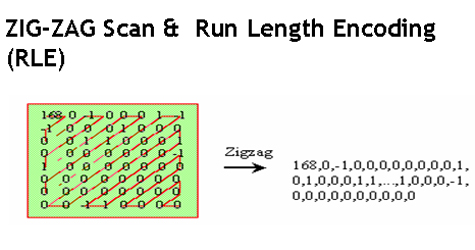

Secondly, a zig-zag scan is done to group the runs of 1’s and 0’s. The next step is to remove the redundant data with run-length encoding (RLE): it is not necessary to transmit a long string of identical values when one symbol can represent the whole string. The resulting stream is encoded as (skip, value) pairs, where skip is the number of 0’s preceding a non-zero value and value is that non-zero value.
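The zig-zag scan and the (skip, value) coding can be sketched as follows. The helper names `zigzag_order`, `zigzag_scan` and `run_length_encode` are assumptions made for this example, and a small 4×4 block is used so the result is easy to follow by hand. Trailing zeros are simply dropped here, whereas a real codec would signal them with an end-of-block code.

```python
def zigzag_order(n=8):
    """Return the (row, col) positions of an n x n block in zig-zag order."""
    # Walk the anti-diagonals (row + col constant), alternating direction.
    return sorted(
        ((r, c) for r in range(n) for c in range(n)),
        key=lambda p: (p[0] + p[1], p[0] if (p[0] + p[1]) % 2 else p[1]),
    )


def zigzag_scan(block):
    """Flatten a square block into a list following the zig-zag order."""
    return [block[r][c] for r, c in zigzag_order(len(block))]


def run_length_encode(values):
    """Encode a list as (skip, value) pairs: skip zeros, then one non-zero value."""
    pairs, skip = [], 0
    for v in values:
        if v == 0:
            skip += 1
        else:
            pairs.append((skip, v))
            skip = 0
    return pairs  # trailing zeros are not encoded in this sketch


block = [
    [9, 3, 0, 0],
    [2, 0, 0, 0],
    [0, 0, 0, 0],
    [1, 0, 0, 0],
]
scanned = zigzag_scan(block)
print(scanned)
print(run_length_encode(scanned))
```

The scan turns the block into one list in which the zeros form long runs, and the sixteen values shrink to four pairs: (0, 9), (0, 3), (0, 2), (6, 1).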

Thirdly, predictive coding is done: the current frame is used to predict the next frame, and only their difference (called the prediction error) is coded. The better the prediction, the smaller the prediction error, and a small prediction error can be encoded with fewer bits than the actual value. In MPEG, the DPCM (Differential Pulse Code Modulation) technique is used for predictive coding. On the decoding side, the decoder combines the prediction error with the current frame to form the new frame. The resulting values are then coded using a technique known as “VLC”.
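The idea behind DPCM can be shown on a short list of numbers. This sketch assumes the simplest possible predictor, "the next value equals the previous one", and codes the values without loss; the function names `dpcm_encode` and `dpcm_decode` are chosen for the example only.

```python
def dpcm_encode(samples):
    """Replace each sample by its difference from the previous sample."""
    errors, prediction = [], 0
    for s in samples:
        errors.append(s - prediction)   # prediction error
        prediction = s                  # the next prediction is the current value
    return errors


def dpcm_decode(errors):
    """Rebuild the samples by adding each error to the running prediction."""
    samples, prediction = [], 0
    for e in errors:
        prediction += e
        samples.append(prediction)
    return samples


values = [100, 102, 101, 105]
errors = dpcm_encode(values)
print(errors)                 # small numbers after the first entry
print(dpcm_decode(errors))    # the original values again
```

After the first entry the prediction errors (2, -1, 4) are much smaller than the original values, so they can be represented with fewer bits.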

Fourthly, Variable Length Coding (VLC) is done. It uses short codewords to represent values that occur frequently and long codewords to represent values that occur less frequently. This makes the coded string shorter than the original data.

In MPEG, the last of all the encoding processes is to use VLC to reduce data redundancy, and the first step in the decoding process is to decode the VLC to reconstruct the image data. Both encoding and decoding with VLC must refer to a VLC code table. Each entry in this table has two parts: the original data and the corresponding codeword.
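A table-driven VLC encoder and decoder might look like the sketch below. The code table is hypothetical and was made up for this example (it is not taken from the MPEG standard). It only needs to be prefix-free, meaning no codeword is the beginning of another, so the decoder can tell where each codeword ends.

```python
# Hypothetical code table: frequent values get the shortest codewords.
CODE_TABLE = {0: "1", 1: "01", -1: "001", 2: "0001"}


def vlc_encode(values, table=CODE_TABLE):
    """Concatenate the codeword of every value into one bit string."""
    return "".join(table[v] for v in values)


def vlc_decode(bits, table=CODE_TABLE):
    """Read bits until they match a codeword, emit its value, and repeat."""
    reverse = {code: value for value, code in table.items()}
    values, current = [], ""
    for bit in bits:
        current += bit
        if current in reverse:
            values.append(reverse[current])
            current = ""
    if current:
        raise ValueError("trailing bits do not form a codeword")
    return values


data = [0, 0, 1, 0, -1, 2, 0]
bits = vlc_encode(data)
print(bits, len(bits))
print(vlc_decode(bits))
```

The seven values are coded in 13 bits, because the most frequent value (0) costs only one bit each time it occurs.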

Fifthly, Motion Estimation is done, which is used to predict a block of pixel values in the next picture using a block in the current picture. The location difference between these blocks is called the Motion Vector. When the decoder obtains this information, it can use it together with the current picture to reconstruct the next picture.
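One simple way to find a motion vector is an exhaustive block search, sketched below. The matching cost used here, the sum of absolute differences (SAD), and the names `sad` and `find_motion_vector` are assumptions made for the example; the text above does not say which search strategy or cost an actual encoder uses.

```python
def sad(current, nxt, cy, cx, ny, nx, size):
    """Sum of absolute differences between two size x size blocks."""
    return sum(
        abs(current[cy + j][cx + i] - nxt[ny + j][nx + i])
        for j in range(size) for i in range(size)
    )


def find_motion_vector(current, nxt, ny, nx, size, search):
    """Find where the block at (ny, nx) of the next picture came from.

    Returns (dy, dx): the offset from (ny, nx) to the best-matching block
    in the current picture, searching +/- `search` pixels in each direction.
    """
    height, width = len(current), len(current[0])
    best, best_cost = (0, 0), sad(current, nxt, ny, nx, ny, nx, size)
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            cy, cx = ny + dy, nx + dx
            # Skip candidate blocks that would fall outside the picture.
            if 0 <= cy <= height - size and 0 <= cx <= width - size:
                cost = sad(current, nxt, cy, cx, ny, nx, size)
                if cost < best_cost:
                    best, best_cost = (dy, dx), cost
    return best


def make_frame(top, left):
    """8x8 black frame with a small 2x2 patch at (top, left)."""
    frame = [[0] * 8 for _ in range(8)]
    patch = [[10, 20], [30, 40]]
    for j in range(2):
        for i in range(2):
            frame[top + j][left + i] = patch[j][i]
    return frame


current_frame = make_frame(2, 2)
next_frame = make_frame(3, 4)     # the patch moved 1 row down, 2 columns right
print(find_motion_vector(current_frame, next_frame, 3, 4, size=2, search=3))
```

In this toy case the search finds that the block at row 3, column 4 of the next picture matches the block one row up and two columns to the left in the current picture, so only that offset needs to be sent instead of the pixel values.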